My post will discuss the sociology of beliefs, values and attitudes to describe the cultural, institutional and historical ways in which the public has engaged with science. I present two case studies of “hot topics” that usually draw anti-science comments. I show how cultural beliefs about trust and risk influence the extent to which people accept scientific evidence. I go on to discuss how sociology can help improve public science outreach.

Beliefs: Case study of gender inequality and the environmental movement

In social science, the concept of belief describes a statement that people think is either true or false. Beliefs are deep rooted because they evolve from early socialisation. They are maintained tacitly through everyday interactions with our primary social networks like family, religious communities, and through close friendships with people from the same socio-economic backgrounds. Beliefs are hard (though not impossible) to change because there is a strong motivation to protect what we believe. Beliefs are strongly tied to personal identities, culture and lifestyle. Beliefs are harder to change in a short time frame because they’re interconnected with structures of power and inequality. Chipping away at one belief means re-evaluating all beliefs we hold about what is “true,” “natural,” and “normal.”

Beliefs are hard to justify objectively because they represent the social scaffolding of all we take for granted.

In this meaning, beliefs represent the status quo of what we’re willing to accept. If we ask people: Why do you think your belief is correct? They’ll often answer on “gut instinct” or they’ll defer to common sense. This is true because everyone knows it! For many people, belief is either an act of faith that does not need scientific proof, or otherwise, people find evidence for their beliefs everywhere they look, even though this does not include looking to empirical data. In many cases, however, people will also refer to authoritative texts to back up their beliefs, like religious teachings or news reports.

The key to understanding why beliefs are hard to shift comes down to one question: Who benefits from this belief? For example, when we ask: Are men and women fundamentally different? Someone who benefits from patriarchy and doesn’t want to lose their gender privilege will say: “Of course, men and women are different, look around you! Women act this way and they’re from Venus; men act that way and they’re from Mars.” A social scientist will bring up examples from other cultures where gender is organised differently. Still, the other person will see these examples as exceptions to their rules about gender.

Gender differences are used to justify inequality: women are more caring, so they’re better at raising babies; men are more rational, so they make better leaders. To think otherwise means restructuring our labour arrangements at home and work; it means rethinking social policy about how we remunerate jobs; it means changing the balance of power in the law, in education, in the media and so on.

When people don’t believe scientists on climate change or genetically modified (GM) foods or vaccinations, it comes down to their assessment of: What does this mean for me? What life changes are required of me? How does this scientific knowledge undermine my place in the world? In other words: how does this science support or threaten my values?

Values: Case study of the adoption and resistance of GM foods

Values are linked to one’s sense of morality. Where belief is something maintained at the individual level through agents of socialisation, values are more easily distinguished through their connection to broader social interests. Values relate to the standards of what individuals perceive to be “good” or “bad” in direct reaction to what our society deems to be “good” or “bad.” Values are shaped by cultural institutions like education and religion. Societies depend on shared values to maintain social order, which is why many societal values are often enshrined in law. Traditional societies appeal to values of authority like religion or authoritarian leadership. Christian capitalist societies appeal to values of individualism and economic rationalism. Social democratic societies are more secular and informed by humanist values. All of these underlying value systems impact on public values of science.

Still, values are contested, depending on whose social interest is being served.

Take for example GM foods. In Sweden, public consultation and scientific input are framed around best interests for public good. (Not without controversy.) Some Scandinavian laws will allow GM food to be grown in controlled areas because it benefits their national economy, but they won’t support imported GM foods. In countries like Peru, GM foods have been banned for 10 years because they mostly come from imported products. These products are deemed to be unsafe. At the same time, GM foods conflict with class struggles of the highly political Indigenous farming movement. Peru has a long history of mistreating Indigenous populations, but the national identity is firmly locked to agrarian innovation that stretches back to the Incan empire. Peru has also joined other Latin nations to wind down trade with the USA and increase trade with Asian nations. Resistance to GM foods serves a dual economic and political purpose of resisting cultural imperialism and supporting Indigenous farming movements. (Though Indigenous environmental protests are ignored in other areas, such as mineral resources and specifically big oil.) The key here is that the political economy of Peru (and Latin America more broadly) is informed by socialist values that resist capitalist interests. GM foods have publicly been positioned as part of this capitalist incursion.

In the USA the GMO public debate has been framed around commercial interests. This stems back to the early industrial era and the Plant Patent Act of 1930. The commercialisation of American agriculture goes back to the early 19th century, when many farming communities were self-sufficient. By the early 1920s differentiated crop varieties were already established. Trade associations arose as mass production started. With more money at stake, legislation stepped in to formalise the production of seeds. Historical evidence shows that the law struggled to weigh up the commercial lobbying of large agricultural organisations (specifically the American Association of Nurserymen) versus the rights of small farmers. At this time, when economic rationalism was beginning to set precedents, commercial interests won out over collective interests. This isn’t simply a case of corporate greed; this is about the political economy of early American society. American values were firmly tied to Protestant beliefs (sociologist Max Weber has detailed this thoroughly). In early American capitalism, it was God’s will that people should work hard and make as much money as possible, to ensure their place in heaven. The 1930 plant patent was fought heavily on moral and social grounds.

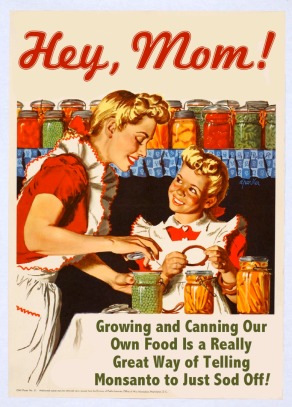

Today, these tensions around values and commercial interests often feature in GM food debates (see for example various highly emotive comments to our Science on Google+ Community). Invariably, people bring up Monsanto. I’m not a fan of Monsanto. In fact, I see them operating as a neo-colonialist corporate machine. Then again, I can accept that the practices of one entity do not nullify the potential benefits of GM foods altogether. I want to learn even more about the science and debate the findings robustly. Anti-science members of the public, however, cannot accept that scientific methodology of GM foods can be separated from commercial interests to help feed underprivileged people.

This is glaringly obvious when we have scientists discuss the not-for-profit scientific collective that has developed Golden Rice, a type of rice that is engineered to be rich in Vitamin A. In our Science community, we have people dismissing the potential benefits of Golden Rice, because they don’t believe that the science can remain commercially impartial. These people see only conspiracy and greed behind the motivations of scientists. Otherwise people argue that this technology is unsafe, though it’s been tested (though not without controversy over informed consent). People also get very angry that Golden Rice doesn’t address the social causes of poverty, such as corruption and social inequality. Golden Rice doesn’t claim to solve world poverty; it aims to address Vitamin A deficiency in developing regions.

People think that science should solve all the world’s problems, when in reality we tackle one aspect of one specific problem at a time.

Debates about GM foods are, in fact, a cultural battle over value systems. Are capitalist nations like America still deeply invested in individualistic values or can we move towards collective action? Scientists make the case that some GM food technologies represent a safe, relatively inexpensive way to address hunger. In order to accept this argument, the public needs to be able to trust that the science isn’t governed by commercial entities. How you see this depends on your values: are GM foods “good” or “bad”? A vocal section of the public is distrustful of science on GM foods. They think GM foods are bad. As I’ve shown, this is the outcome of history, culture and social changes. Different countries have different positions which influence whether people largely celebrate, decry or feel anxious about GM science.

In these cases, the science is used to draw very different conclusions. GMOs are either for or against the national interest. GMOs either support social change or they impede progress. Attitudes towards the science depend on the cultural and political interests of different social groups. What’s “good” or “bad” about GM foods depends on whose point of view best aligns with societal values.

Attitudes: Case study on perceptions of risk and trust

Attitudes are a relatively stable system of ideas that allow people to evaluate their experiences. This includes objects, situations, facts, social issues and other social processes. While attitudes are relatively stable, they are more superficial than beliefs and less normative than values. Attitudes can be changed more easily than beliefs. Sometimes people will say one thing, especially if it’s socially desirable to do so, but in private they may not adhere to that attitude. Someone can say they support equality but they may not practice it at home. Often, however, people are not aware that their attitudes are contradictory.

In contrast to values, which are culturally defined, attitudes are interpersonal. We are constantly interpreting other people in relation to the situation we find ourselves in. Language, motives, emotions and relationships can change attitudes over time. Social context also matters: cultural beliefs and values can influence whether or not attitudes change.

Attitudes about science are shaped by many societal processes, such as education, class, ethnicity and so on. Yet the social science literature has overwhelmingly shown that attitudes towards science are connected to:

- Whether or not people are willing to accept the risks associated with a particular scientific issue; and

- Whether or not people trust scientists in general.

Trust is a multi-dimensional concept; that is to say, it is made up of many different characteristics and these change with respect to a given social group in a particular time and place. Psychologist Roderick Kramer provides an extensive review of the empirical research on trust (he covers studies from the 1950s to 1999). At the interpersonal level, we develop trust in another person based on a belief that they have an interest to live up to our expectations. They care about us, they need us, they have a legal or moral obligation to help us. Among individuals, trust is about behaviour and reciprocity: I’ve proven you can trust me because I have not let you down and because we both understand that our trust goes both ways.

Some individuals can be generally considered to be “high trusters” and others “low trusters,” depending on their personal biographies; their experiences with institutions of authority; and other socio-cultural factors. People who are generally predisposed to the idea of trust are more likely to be open to collective social action. The reverse is true of people with low trust.

While Kramer doesn’t make this connection, low trust and commitment to social action impact on the public’s trust in science. If I hold a strong belief about the world and science contradicts my view, I will need a high degree of trust in order to be open to the information. If I take on board this scientific view, I will be forced to act on it. This means changing the social order that benefits me currently. That’s a big investment. If I have high trust in another oppositional social institution like religion or my community leader who is supporting my current belief system, why should I trust science?

At the societal level, trust doesn’t always work in relation to direct interpersonal engagement. Kramer shows how some people in certain circumstances will trust authority figures based on their history. That is: I trust this organisation because they have a strong reputation and other big players endorse them. Others will trust due to someone’s category of authority (science, politics), their role (medical practitioner, priest), or a “system of expertise” (bureaucratic management).

People who trust an authority figure or an organisation’s motives are more likely to accept outcomes, even if they are negative. Trust will matter more when people have a lot to lose, such as when an outcome is unfavourable. For example, when science will lead to social change or some new technological impact that I don’t want because it threatens my beliefs, livelihood, culture, identity or lifestyle – this requires high trust.

Research shows that public trust in social institutions has long been in decline. In America, civic trust was high just after WWII due to the nature of the war, its impact on the economy and other social changes. Public trust declined in the late-1960s due to the Vietnam war and other conservative economic and political changes. Public trust is generally at half the rate it was in the mid-1960s for federal government, universities, medical institutions and the media. Specific incidents become exemplars for more distrust, such as political scandals. Progress in technology is also related to higher distrust. People who live in relatively affluent, technologically advanced societies are more likely to distrust science. Up until the mid-1990s, the media was people’s main information source, but it was also the most distrusted social institution. The reverse was true of scientists: university researchers were trusted as experts, but people were less likely to be listening to them because they didn’t have much exposure to scientists.

In some cases, we might think that distrust in science is about lack of knowledge. Being familiar with a person or institution can help to engender trust, but not always. Again, it comes down to beliefs, values and attitudes.

People who are highly knowledgeable on a particular area such as politics are more likely to spend a lot of time taking in and responding to world views that contradict their own. These people have an information bias that they do not readily recognise. They will see themselves as rational and impartial. They see themselves as sceptics ready to weigh up evidence as it comes to hand. In fact, they spend more emotional energy arguing against conflicting information because it clashes with their personal world views. They argue passionately because their attitudes align with their beliefs and values.

Conversely, people who know little about a topic are more likely to accept new information to be true or unbiased, but they show a weak commitment to defending this new information. Paradoxically, if people don’t feel personally invested on a social issue, they will not act. This suggests that more information or education on a topic alone is not enough to improve how the public engages in science or democratic processes.

Bluntly put, more public discussion on science alone is unlikely to convince people to productively engage in scientific discussions.

Even amongst scientists, trust in science and risk perception is affected by sociological processes. A 1999 study of the members from the British Toxicological Society finds that women were more likely to have higher risk perceptions for various social issues in comparison to men. This ranged from smoking, to car accidents, AIDS, and climate change. Looking deeper, it was a specific sub-set of White men who were more likely to perceive a low risk for these social issues; those with postgraduate qualifications who earned more than $50K a year and who were conservative in their politics. They were more likely to believe that future generations can take care of the risks from today’s technologies; they believed government and industry can be trusted to manage technological risks; they were less likely to support gender equality; and they were less likely to believe that climate change was human-made (bearing in mind climate change science has since developed further). These men had a higher trust in authority figures and they were less likely to support equality and social change. Why? Because being in a position of relative social power, they had the most to lose from social change on environmental, gender and political issues.

So if beliefs and values are so seemingly immutable, and attitudes mask underlying motives that people are unaware of, how do we increase trust in science to improve the tangible outcomes of public outreach?

Moving Forward: A case for public science

Beliefs are tied to personal identities and social status. People defend beliefs on the grounds that what they believe is true, obvious or simply “a given fact” because they have been socialised to do so since birth. Beliefs are tied to social belonging and social benefits, so there’s a lot at stake in defending them. Beliefs about equality align with the environmentalist movement because addressing climate change requires full civic participation. The beliefs of environmentalists also overlap with feminists as both groups want to see change in the social order. Beliefs are shaped by social institutions, but they can also be restricted by material constraints.

Values are normative because they are linked to powerful social institutions. Some scientific innovations are perceived to be inherently “good” or “bad” depending on how vested social interests are understood. In some contexts, GMOs are seen as bad because people think scientists can’t be trusted to separate methods from commercial interests.

Attitudes are interpersonal. They depend upon social exchange with particular people, but attitudes also reflect hidden or contradictory ideas that people hold. Public trust in institutions has been eroded since the late 1960s in different ways in different societies. People don’t trust scientists because for much of our history, our knowledge has been kept within academia. Most of the time when science reached the public, it was reported on by the media, which is itself a mistrusted, though widely consumed, source of information.

Changing attitudes on “hot button” scientific issues is hard because people who seek out these debates already have pre-existing beliefs. Sometimes people think they’re being sceptical of a corrupt or unjust system when they take an anti-science perspective, as with the case of GM foods.

Most people view themselves favourably when it comes to social, economic, political and scientific issues. They think they’re impartial and rational, when really they’re just defending their place in the world.

Sometimes people already have a high distrust of institutions like medicine and science because they’ve been marginalised or abused by systems of power. The history of medicine has many horror stories, especially in connection to unethical treatment of Indigenous groups, minorities, women and the mentally ill. Unfortunately, science cannot simply chalk these up to isolated incidents of yore, as these cases directly impact on present-day public mistrust of scientists.

Rather than dismissing the past, it is more useful for scientists to understand how historical and cultural relations affect how people perceive the scientific community.

Better public education on science is only part of the answer. As we see, even amongst highly educated scientists, those with greater social power will happily acquiesce to higher authority. They are willing to take more risks with science and technology, but they are less supportive of equality and progressive social change where this threatens their beliefs and social position.

Supporting the public’s reflexive critical thinking is more important than simply pumping out science information.

Reflexive critical thinking

Sociologist Ulrich Beck argues that a general state of reflexivity might be part of the reason why people are so worried about technological and social risks in ways that are not especially productive.

Reflexive critical thinking is a methodology for knowing how to question information, as well as identifying and controlling for our personal biases.

How sociology can help

As Susanne Bleiberg Seperson and others have argued, sociology has a public image problem. The public doesn’t know what we do, let alone how we do it. We write in jargon. We are seen as too theoretical and not very practical.

The social sciences are well-poised to improve the public’s trust in science because our work is focused on the influence of social institutions on behaviour. We are not above critique on these grounds. My blog has regularly shown how even as we expand social knowledge of culture and inequality, Western social sciences can misappropriate minority cultures or exclude Indigenous voices.

Many of the anti-science critics are espousing cultural arguments without knowing it. This is where public sociology can really shine, by showing how inequality, social values and power affect how people engage with science.


This is really excellent work! I’m incredibly interested in the scientific community on G+ as one of the most accessible and cross-disciplinary scientific communities I’ve encountered in my 20+ years online. I don’t know any other resource online where I can see (much less participate in) the actual practice of science (in all its forms) carried out as an everyday activity by an active community of thinkers. On G+, I can engage the scientists not simply as distant experts, but also as human beings with interests and personalities as real as anyone else I know.

I think it is incredibly special and one of the highlights of this social network. I’m wondering what precedent and inspiration the organizers of the community are drawing from, and what they imagine the community might grow into.


Hi Daniel Estrada Thanks for your encouraging comments on our Community! It’s really great to read that you’re enjoying this interaction aspect of Google+. I must admit I was drawn to Google+ as a researcher as I was specifically looking for interdisciplinary interaction. I’m active on Twitter, Facebook, and on various blogging platforms, but I don’t find them as satisfying. It’s hard to engage properly. The sharing features on Google+ and the way communities work are ideal for finding scientists. I’ve learned so much from chatting to other researchers here on Google+. It’s been incredibly inspirational.

As far as the precedent and inspiration for Science on Google+, our Community was founded by Chris Robinson so I’ll let him speak to the motives. I can tell you that Chris has been very open to collaboration and input, plus he’s shown incredible vision in bringing together a strong group of Moderators from very different fields. Our team are all practising scientists who were frustrated to see science being misrepresented in the media. We are committed to elevating the quality of science posts. While we like gifs and memes, we see a place for in-depth discussion going beyond headlines and superficial soundbites. Google+ is, to my mind, the best way to pool together our collective efforts for public science.


+Zuleyka Zevallos – Yours is a very interesting article on a compelling topic. I apologize, but I have so many questions!

1) Is it reasonable to think that among all fields of endeavor, science stands the best chance of altering views among a low-trust population because published science includes comprehensive supporting data and thorough methodological descriptions? In essence, does science gain advantage in converting beliefs by allowing the reader to trust the data even if they don’t trust the individual scientist or organization?

2) Living in the U.S., especially in light of recent governmental and industrial intelligence scandals, I can anecdotally say that trust in authority and social institutions is generally very low. Is this distrust likely to bleed over into the sciences, since science is a sort of intellectual authority, or might science be viewed as so firmly based in logic and so rigorously structured as to be above reproach?

3) It is my nature to reside in dark places, and I find threats to science dim the light further. Is there reasonable hope for positive change, or, to paraphrase Max Planck, must we put forth ideas and wait an entire generation to see them flourish?

(actual Planck quote: A new scientific truth does not triumph by convincing its opponents and making them see the light, but rather because its opponents eventually die, and a new generation grows up that is familiar with it.)

In December Tom Nichols wrote about trust and the death of expertise in The War Room blog: http://tomnichols.net/blog/2013/12/11/the-death-of-expertise/. His view is a bit more emotional, but touches on some of the same points you relate here. By sheer coincidence I read both posts today, so I may be joining topics inappropriately (temporal bias?). I’ve also crashed embarrassingly into my own issues with expertise and personal bias in the last week. I’m getting educated, sometimes by great posts like yours, sometimes by force. But I’m learning, and hopefully becoming a better, more valuable person as a result.

Your writings help tremendously.


Michael Verona Thanks for these great questions!

1) Does science stand a better chance of changing the minds of “low trust” individuals in comparison to other institutions? Not necessarily. It depends on where the lack of trust originates. People can distrust science due to personal experience with science, such as negative experiences with doctors, or other authority figures, such as the law. Some people can distrust science because of historical mistreatment, in the case of minorities, or because their needs are not being fully met by institutions that should be looking after them. I think about the anti-vaccination movement and its dubious connection to autism here.

Disenfranchised individuals set up or seek out their own peer groups in response to their alienation. This is good for emotional wellbeing, but may not be great for social progress when they give one another advice that contradicts prevailing research. In these cases, distrust in institutions outweighs scientific evidence. Science needs to do a better job of listening to disenfranchised/vulnerable groups. A different kind of public outreach is needed here, with researchers speaking directly to the concerns of marginalised and under-represented groups.

Low-trust groups need to be shown that science adds more value and relevance to their lives. Publishing out more science is, in itself, not enough.

2) Low trust in Government & intelligence agencies: This is a really great point. In essence, yes, privacy issues, especially those related to political conflict, hurt science overall. The figures I quoted above on the nosedive of public trust in science are directly the outcome of scandals like Watergate, the Vietnam War and other political and economic issues. While some people can separate science from politics, you’ve no doubt seen first hand on our Science on Google+ Community that people often say scientists are corrupt and that all research is dictated and shaped by Government. This is only true in the sense that public funding affects which science projects are supported, but the idea that researchers tweak their findings for Government is erroneous and damaging to public trust.

You’ll find that even within Government researchers do not bend their findings for political agendas. Politicians’ speeches and public policy often misuse science. These people are not researchers; their job is to support the Government of the day, so this is where the disjunct lies. This is another key area I’m devoted to: applied research. Scientists do collaborate on social policy, but not enough. This doesn’t mean being corrupted by Government, but rather shaping how decision-makers think about social, medical and technological problems using science. Rather than leaving policy makers to their watered down use of science, scientists can better inform social policy directly.

3) Do we have to wait a generation for change? No! Changes can and do happen within one generation. Think about the ubiquitous nature of technology we are currently witnessing. The first email I ever wrote was in my first year of university in 1997. I wrote it to my friend who was sitting beside me at the time. We didn’t know anyone else to email as none of our friends had email at home then. Here we are 16 years later and I’m writing to you from Australia while you’re in America! Technology changes social relations too: it enhances how people think about and use information. That Tom Nichols piece was terrific (I hadn’t read it before, thanks for passing it along!). As Nichols argues, thanks to technology, more people think they’re experts. This isn’t new; there have always been alternatives to communicating about science and politics. People have always sat around waxing lyrical about topics they don’t really know much about. Social media has simply proliferated the means by which people do this critique.

The spread of education, particularly for women, has had a rapid impact on the decrease of the average number of births around the world. This has happened within less than one lifetime in various areas (a nice overview on TED http://goo.gl/KEt9Xn). Social change is slower in other cases. For example: why is racial inequality still so prevalent given the civil rights movement was in the 1960s? Why have we not eradicated gender inequality altogether given the feminist movement took off in the 1970s? Why are people still resisting climate change science given environmentalism took off in the 1980s and 1990s? All of these changes are slower because lots of social institutions are interlocking their interests in opposing further progress: economics, politics, religion, education and so on.

In a sense, what Nichols says is true: more people reading crap science means more people perpetuating myths (e.g. “people only use 10% of their brains”). But if scientists take back control of these conversations and contribute to both public debate and social policy (as has been the case with birth rates), change will move quicker.

Thanks for your great comment!


Zuleyka Zevallos – Thank you so much for your thorough and reasoned response to my questions! I love the way you write and your dedication to science outreach.

On topic:

1) We can address ignorance with education and through outreach, but entrenched and reasoned disillusionment, as in those minorities previously abused in the name of science, seems a very difficult challenge indeed. The soul-crushing and criminal U.S. Tuskegee Experiment comes to mind (https://en.m.wikipedia.org/wiki/Tuskegee_syphilis_experiment). This began before my parents were born and continued into my lifetime. The distrust it sowed reverberates in some minority communities to this day. I do not know how to correct this, although complete, end-to-end transparency might help.

2) Carl Sagan said, “We’ve arranged a society based on science and technology, but in which no one knows anything about science and technology.” We need scientists in politics, both as advisors and as representatives. As much as I love and depend upon science, I do not know how to make this idea popular enough to win elections, especially in a country as backwards as the U.S. where magical ideas prevail among the voting populace.

3) I have to share an internet anecdote with you: back in the early 1980s a friend and I were exploring dial-up BBSs and stumbled across some esoteric and undocumented connection information. Being kids, we tried it out (for science!) and found ourselves on an ARPANET node (the predecessor to the internet) lurking in a discussion among physicists working for NASA. Lurking was harder back then, with few participants and a sequential text interface, and we got caught. Our panic and thoughts of visits from the FBI were quickly replaced by welcomes from the scientists, and the general consensus that if we were clever enough to get in, we deserved to stay and visit. For about two hours we talked physics as best we could, asked lots of questions and received lots of answers. We were already science enthusiasts, builders of electromagnets and commanders of chemistry sets, but this was something completely different. We were welcomed, without advanced degrees or grown-up jobs. We met the meritocracy of science, and it was breathtaking.

I’m sad to say that the hope engendered in that moment of generosity and inclusiveness has all but faded. I grew up, becoming myself under the constant threat of nuclear war, the incessant drumbeat of racism and sexism and hate, the perversion of religion into politics, and the replacement of science with mythology. I still raise my voice, perhaps from habit, certainly more with anger than with hope. I want to believe that we can become enlightened through knowledge, understanding, and inclusiveness, but the evidence…suggests otherwise. I hope coming generations have a more positive effect. Perhaps this very forum is the beginning.

Thank you for your insights and contributions. Every time you invite someone in, every time you engage with them, you make the world incrementally brighter.

Michael Verona Thanks for your thoughtful and kind words. Yes, Tuskegee was appalling. Similarly, the field of gynaecology has a lurid history. On my Tumblr, I responded to someone writing about J. Marion Sims’ experiments on enslaved women. He performed awful experiments without anaesthetics, believing African-American women had a higher tolerance for pain because of his racist beliefs that minorities were a lower life form. I then added a comment on the history of the oral contraceptive pill, which left women in a town in Puerto Rico either sterile or with children born with deformities (http://goo.gl/mChzcj). While these experiments advanced modern medicine, they are just two examples of why minority and vulnerable populations are sometimes wary of what science will do to them. Interesting that you mention Carl Sagan; I have a post coming up on him.

I don’t think we’ve lost the fight just yet, Michael! With more public outreach, I hope that people in power will see greater value in science and perhaps we will have stronger political influence in policy roles. Science advisory committees exist of course, but we can be doing more to shape social policy. The Carl Sagans of the world are few and far between. For a long time, scientists engaged with the public remotely. Social media is changing this. I see only opportunity for improvement!

That’s such a great story about ARPANET! Wow, that must have been so awesome to chat with those scientists. Great hacking anecdote, but an even lovelier example of outreach, with the scientists answering all your questions!

Don’t take my post above to mean that change can’t happen. Some people’s beliefs are threatened by science because they feel they have a lot to lose. It is the job of science outreach to show people that’s not the case. Agreed that every door opened to communication brightens the world!

Zuleyka Zevallos – I admire and envy your optimism. Optimism and trust are not parts of my belief system, unfortunately, so I depend on energetic optimists like you and the other maintainers of this community to carry the proverbial torch. You have my usually tacit and sometimes vocal support, but I’m not the person to carry the hopeful message forward. I’m neither literally nor figuratively qualified. And I’m very tired in a threadbare sort of way.

Regardless, I toast you makers of progress:

May your cause be true and just,

May you earn the public’s trust,

And may the changes that you make

Improve the world.

Michael Verona Are you saying you’re a “low truster”? Jokes aside, I understand where you’re coming from! It’s great that you engage in these conversations even if you’re not feeling very optimistic. And thanks for your beautiful words of encouragement!

Zuleyka Zevallos – I am definitively “low trust.” I engage in these conversations because somewhere there may be a spark of hope, and I feel every obligation to encourage it.

Science that isn’t under attack isn’t science, it’s advocacy. Scientists who complain that they are attacked, and who hide behind the mantle of science, the mantle of peer review, and the mantle of moral indignation that the public is hesitant to accept what they are unwilling to debate or discuss in a public forum, are not scientists. They are advocates. Science is a dialectic process limited only by the methodologies of science. When one side in the continuing argument starts calling the other side deniers, and when that same side refuses to discuss the reproducible experimental evidence that, supposedly, underlies their beliefs, there is one and only one reason why they are doing this: they have lost the argument.

Hi Claudius,

There are plenty of science topics that aren’t politicised and so they aren’t under attack. These things ebb and flow in response to national priorities in science and the political economic climate of the day. Some topics that strike a moral, emotional or political chord are more likely to enter into public debate. Science doesn’t have to be under attack in order for it to be meaningful.

Peer review is the academic benchmark for science. Claims that refute peer-reviewed science without peer-reviewed scientific evidence do not pass the scholarly test. This is the definition of science. Arguing on emotion, or refuting science on the basis of personal world-view, values or belief, is simply personal opinion. That is not science. Science requires scientific evidence.

Sorry but you are wrong. You do not understand science. Peer review is the problem, not the solution. There is only one academic benchmark for science, and that is reproducible experimental evidence. What we see with climate change is advocacy for a cause, not science. They have nothing testable. Thus the reason they don’t/won’t debate.

This is a sociology website providing peer-review-based evidence and informed commentary. Climate change science has reached consensus amongst 97% of research scientists. This evidence is well documented, including by the IPCC, an international group of scientists, think tanks and research organisations. I’ve discussed this literature on my blog (https://othersociologist.com/2014/07/13/climate-change-denial/) and elsewhere. The articles that counter this scientific consensus have been shown to have been funded by companies with vested interests in fossil fuels as well as organisations with conservative politics. See the research by sociologist Robert Brulle: http://drexel.edu/now/archive/2013/december/climate-change/ (download study at the bottom of the article).

Science is determined by reproducible experimental evidence, not phoney statistics constructed by scientific pretenders that have a self-serving agenda.

Consensus is to science what conspiracy is to politics.

You are trotting out conspiracy theories all over again, while I have pointed you to peer-reviewed science that is internationally supported by experts. If you’d like to better understand your distrust of science, I write on this topic often, using peer-reviewed research. Aside from the resources I’ve already pointed you to, see: https://othersociologist.com/2014/01/18/sociology-of-public-science/

My commenting policies are clear: I don’t allow vague attacks based on personal fears or conspiracies, but instead welcome informed discussion. I hope you take the time to learn from the resources I’ve pointed you to.

“Peer review is the academic benchmark for science. Claims that refute peer review science without peer reviewed scientific evidence do not pass the scholarly test.”

Well I don’t know about that.

There’s evidence to suggest that the peer-review process is susceptible to corruption and/or bias:

http://www.thesociologycenter.com/Suppressed.html

https://en.wikipedia.org/wiki/Sokal_affair

https://www.theguardian.com/science/2011/sep/05/publish-perish-peer-review-science

http://www.ncbi.nlm.nih.gov/pmc/articles/PMC1420798/

http://www.the-scientist.com/?articles.view/articleNo/34518/title/Opinion–Scientific-Peer-Review-in-Crisis/

Pages 155 – 159 of the following: https://books.google.com/books?id=FVrBAwAAQBAJ&pg=PA155&lpg=PA155&dq=corruption+in+peer+review+sociology&source=bl&ots=xLaLVNutEM&sig=8_zi43v2MH7FuV6zf85-YJswSEE&hl=en&sa=X&ved=0ahUKEwi9tKO0y7DMAhWKGD4KHfxRCXQQ6AEIMTAC#v=onepage&q=corruption%20in%20peer%20review%20sociology&f=false

So I wouldn’t say what you said above. For one, it appears to be a circular or self-fulfilling argument.

Hi kmgyening. I’ve answered this type of straw argument many times, including on the original threads on Google+ that led to me writing this post. There are cases of flaws in the administration of peer review; as with all things in life, the peer review process is not perfect, but it is the best process we have to test the validity and reliability of research. As I’ve also noted many times, peer review does not end at the time of publishing; rather, it continues after publication, when a wider community of researchers can further test the data and analysis. This is exactly how the purported link between autism and vaccinations was exposed as false, which I’ve discussed on my blog.

The links you’ve provided do not really invalidate the peer review process. In brief:

Your first link is from a conspiracy theory blog that has no sociological merit. The author claims to be a sociologist but has no clear academic credentials and self-publishes materials that have no academic data or theoretical framing. In short, without a peer-reviewed or public research record, this is not a credible source.

The Sokal example which you linked to is from Wikipedia – not a peer-reviewed source, by the way – and it describes a hoax published in Social Text at a time when the journal was not peer reviewed. So, in fact, this example proves the need for peer review. Moreover, a physicist’s attack on postmodern philosophy is rooted in epistemological fallacy: that the physical sciences can render the social sciences obsolete merely reflects how some physical scientists have a weak understanding of the history of science. Philosophy predates all other forms of science and was critical in developing the scientific tradition of enquiry and critical thinking. Physicists and anyone else who do not understand the importance of the philosophy of science have poor training. Regardless, this article does not make the point you are trying to make.

You’ve linked to an opinion piece by David Colquhoun, whose article criticises the “publish or perish” model of academia. This model is something that many researchers, including myself, are engaged in challenging. Specifically, Colquhoun critiques the “quack” journals that are not peer reviewed and which are set up by pariah companies looking to make a profit. Since you are clearly unfamiliar with academic science, there is a big difference between these hack for-profit-only journals and established academic publications that recruit qualified peer reviewers. The author then spends excessive space shredding one peer reviewed study about the benefits of acupuncture. The researchers of that study then respond to Colquhoun at the bottom of his article – which I suspect you have not read. Colquhoun is a professor of pharmacology; the two primary authors of the article he lambastes work in health services. Different scientific disciplines disagree, especially on holistic medicine. That’s fine. But one paper, despite its flaws (in this case a small sample size), does not invalidate all peer review research.

Richard Smith was an editor of a well-respected journal for over two decades. His critique of peer review is similar to the one above: he is opposed to the high costs of publishing. He is an advocate of open publishing. He does not argue that all peer review is corruptible. Instead, he points to some of the social biases within publication networks, such as gender bias, which my work also routinely addresses. Again, the point is not that the peer review method can’t be trusted; it’s that mechanisms of academia make it more likely that some types of research papers will be given greater prestige. White men in English-speaking cultures, especially in Britain, the USA, and Australia, are more likely to be editors of journals that have historically dominated the sciences. White men are more likely to get bigger grants. They are therefore in a better position to be published. Women’s scientific contribution is undervalued in science, and this shows in the fact that the fields where there are more women are seen as less prestigious. This is a problem with the administration and social functions of publishing and academia, not of the scientific principles of peer review per se. Again, if you’d read the author’s critique, he’s not arguing that peer review should be abolished; he’s saying the system could be better managed through enhanced anonymous review, open access publishing, and better training of reviewers. Few scientists would argue with the need to improve the mechanisms of publishing, though not all of us embrace all these recommendations as presented by this particular author.

Professor Judith Curry is once again making the same points as the last two authors in a blog post where she is largely rehashing other bloggers’ writing, and responding tentatively to their arguments. Curry is looking for ways to improve peer review publications; she is not saying that the peer review model is corrupted, as you put it. In particular, she is interested in pre-publication avenues in open access journals.

Dariusz Leszczynski is talking about a specific and controversial Danish study on mobile phones. Other research suggests brain tumour rates have remained unchanged since mobile phones were introduced, which is the crux of the controversy, amongst other things. That’s beside the point here. What matters here is that, as I’ve said, one study that is being debated by the scientific community does not invalidate the basic principle that research should be peer reviewed.

Finally you link to Christian Smith’s book, which I have no doubt you have not read. The first clue is that you have simply googled the phrase “corruption in peer review sociology” which shows up in the link you’ve provided. The second clue is that Smith in no way represents the argument you are trying to make. Smith is involved in critical sociology; specifically, he argues that sociology is too focused on Durkheim’s tradition of the “sacred project” of sociology. That is, that dominant academic sociology aims to transform social problems in society in a narrow sense. His argument is interesting, but sadly in your case, his book has been roundly critiqued for lacking theoretical rigour. You know – what peer review offers. That’s okay because not all sociology books need to be academic, except that in this case, Smith is critiquing American sociology with the lens of an academic. Sociologists routinely critique our own discipline; I do this on this blog all the time, especially with regards to race and public sociology and applied sociology.

You are not a scientist, and so it’s okay that you don’t know how research is produced, critiqued, published and used. But because you are not a scientist you cannot expect to critique scientific processes with a list of hastily googled URLs. My blog often engages with a critique of scientific practices – this does not mean that peer review is all corrupt and all biased. Peer review is the best available system to quality check the theoretical underpinning of research, as well as checking data, analysis frameworks and outcomes before these are released to the public. Research practices should be routinely debated within the scientific community; and they are.

There are too many poor sources of information that the general public latch onto without understanding the credibility of the authors and data. Again, I write about this often on this blog and elsewhere. This is where peer-reviewed research in credible publications, or analysis published by qualified professionals, is essential in shaping public education about the potential benefits, applications or limitations of research.

Finally, you’ve created a straw website to make yourself seem credible. This is exactly the type of shill tactics that peer review can circumvent. Anybody can create a shell website and post uninformed opinions. To be taken seriously, the data and analysis scientists present for publication must pass research ethics and standards and our academic credentials must be publicly available. You may choose to disbelieve peer review science as a non-expert, which is your prerogative. My article here has addressed the sociological reasons why this happens. You have decided not to reflect on the evidence presented, but all that does is continue to feed ignorance about how knowledge is refuted simply due to personal beliefs, rather than evidence.

Scientific methods are the “benchmark for science.” Peer review can be a way to load the deck to favor people who want to cheat the system, as is so rampant in climate sciences. Evidence without adherence to scientific methods is the fool’s gold of science.

Hi Claudius/solvingtornadoes. I ignored your comment here since I’d already answered you and then you came back to my site, logged in as your blog and commented again, so my longer response is above. My comment here is simply to repeat once again to future commenters (and those posting under multiple pseudonyms): unless you have credible peer reviewed evidence, your comments won’t be published because you add nothing new to the conversation. You can use your own blog to say whatever you like, but this is a site about the sociology of science. As such, misguided attempts to advocate climate denialism won’t be tolerated. Be well.

Beliefs are based on fuzzy logic of facts, or, in the case of religion, just belief. But fuzzy logic is what we use all the time for most everything, even in science (the Ptolemaic system of planets works, but the Copernican system is easier; here is fuzzy logic at work).

Thanks for your comment Joey Brockert, but this is not correct. You are confusing lay definitions of belief and logic with scientific notions of these concepts. We operationalise what these words mean in science because they’re tied to methodologies and theories. What you call “fuzzy logic” in relation to belief is another way of saying “common sense” or “opinions.” In science, our scientific opinions and our scientific logic are informed by scientific practices, not belief. Belief does not require empirical evidence. Science does require this.

‘What you call “fuzzy logic” in relation to belief is another way of saying “common sense” or “opinions.”’ Yes, this was my thinking: fuzzy logic gives the aura of logic to opinions and common sense, whether they are logical or not. Fuzzy logic may not be the right term for my thinking here (pun?), but in science there is some fuzziness: the chemical structure of benzene was discovered in a fuzzy way (a dream). I suspect that a lot of math is researched starting out with the fuzzy notion that this sort of thing may work; e.g., Fermat’s theorem started out because he thought he had something, but he could not have, because the solution turns out to involve higher math than was in existence in his day. After the theories are developed, the search for facts supporting them or otherwise starts, which provides the basis for the opinions and logic that develop afterwards, as well as fodder for more theories and discoveries.

Hi Joey Brockert Thanks for your comments! What you’re describing here is the grounded theory approach to research (also known as the “bottom up” approach), where we collect data and then build our key theories as a result of analysis. You still have to have a set of research questions you’re trying to explore and a set research framework. The top down approach is the opposite, where we start with a specific hypothesis from which we develop our methods and conclusions. Both approaches have limitations and advantages.

The notion that science comes from dreams or just mucking around has been largely disproved. The cases where this happens are in a tiny minority, and they are largely overstated. Even the idea that Newton “discovered gravity” after an apple fell on his head is wrong. An idea may come when we’re outside a lab or our office, but this is also a scientific process. It’s known as insight: when an answer comes to us that we’ve been trying to figure out for a long while.

The romantic notion of “discovery” is not quite as it seems. Is science creative? Yes! Is it sometimes flexible? Yes, that’s how we reach paradigm shifts and an entire body of knowledge goes in new directions. But it all follows from established methods and theories.

The reason I mention this is because the idea that science is about sudden inspiration that comes from nowhere (or what you’re calling “fuzzy logic”) is erroneous and sometimes dangerous. We see this type of thinking a lot on Science on Google+, where people think that science is about considering all possibilities, and that it’s about questioning everything, and that all new ideas are great. This leads to people questioning empirical evidence using their own personal feelings and logic, which is what my original post was about.

Sciences are disciplines for a reason: we need to follow set theories and methods and collect evidence for it to be science.

Zuleyka Zevallos Another interesting post! Thank you.

Your statement about beliefs reminded me why I both enjoyed the sociology classes I took and found them frustrating. Closer to “drove me crazy,” to be honest!

You said, “In social science, the concept of belief is a statement that people think is either true or false”.

Please understand I was already forty by the time I took Intro. to Soc. So for me, “belief” was a word in contrast with “knowledge”. I know that 2+2=4, but that’s a fact, not a belief. Even though I think it’s true. And I believe it.

I’d be the first to acknowledge your definition is actually a pretty good dictionary definition as well. But it leads to an argument I had the other day. If you’d like to comment on this, I’d really appreciate it.

I was told that atheism was a belief. I said atheism is the opposite of, or a lack of belief. I was told that a person “believes” in atheism. I countered that the “a” before theist meant “not”, like the “a” before typical means not-typical. This quickly devolved to me trying to counter someone else’s word play. He insisted that not believing was believing.

The problem I see with your definition is that, according to his word play and your definition, he would be correct. Correct = true or false. That is, if you substitute correct with “belief”.

I guess I can’t get my mind around the definition. In my mind, when you believe something is true, you have a belief. If you think something is false, you don’t have a belief in it. I come from the computer science industry personally, and in computer science a Boolean value has two mutually opposite meanings.

I’m sorry, I’m rambling. Your input would be very appreciated though.

Terry Wendt Thanks for your excellent question! The issue here is not semantics so much as scientific meanings versus “lay” language. In sociology we “operationalise” meanings; that is, we assign very specific definitions to the terms we study, based on established theories.

So the social science meaning of belief is indeed as you recounted above: it is about what people hold to be true. Your confusion arises from your understanding of what it means to believe. Belief does not necessarily mean agreeing or disagreeing. It is not about the presence or absence of agreement with an idea. In social science, belief is about basic ideals that a person holds dear (what they think is true). Belief describes the ideas and morals that give people’s lives meaning. Belief is distinct from knowledge, as you’ve pointed out. Facts that we know to be valid and reliable are grounded in scientific evidence.

Belief is a concept we use to measure core ideals and morals that people use to anchor them to their social identities, to their communities and so on.

Most sciences are not really about “proving” if something is true. Scientific methods are used to test hypotheses. Karl Popper argued we can’t really “prove” statements, beliefs or ideas, we can only show them to be false, using empirical testing.

The dictionary definition of atheism is the absence of faith. But this is still technically a belief. Atheists believe that there is no God. We cannot prove whether God exists or not – but if we use science to test religious ideas, especially about cosmology (the creation of the world), these beliefs do not stand up to scientific scrutiny. So we can’t really measure whether “God” exists because of how this concept is theorised. God and other deities by definition must be trusted and they very rarely show themselves to mortals, so we can’t go around testing their presence. But we have overwhelming scientific evidence that the world was not made in 6 days (as well as disproving other religious ideas).

To give you another example: plenty of people say they believe in equality. But when we test this empirically, we see that behaviours do not always match these ideals. This doesn’t mean these people don’t believe in equality. They believe this to be true. But what this means is that 1) These individuals don’t understand equality robustly; and 2) Their actions do not match their ideals in observable ways.

The Census actually counts atheism as a religion. The idea that atheism is a religion makes atheists cranky, but religion does not necessarily mean dogma, scripture, prayer and so on. The sociological definition of religion is about the functions of beliefs about the sacred (spiritual issues and ideas). We also study religion as the answers or narratives people use to explain existential ideas (what is the meaning of life, how should we feel about death, what does it mean to act ethically, so on).

Whether a supreme being exists or not is a religious question. The answer – that a religious being does not exist – can therefore be classified as a religious belief.

There was a wonderful documentary in Australia a few years ago that explained religion as the experience of awe: the idea that something exists that is grander than ourselves. A number of well-known atheists refuted religious ideas, but they all noted that they experience a sense of wonder that guides their beliefs and values. For an astronomer, this is about pondering the vastness of the universe – about possibilities of knowing what is presently unknown. For environmentalists, this is about being in nature and experiencing the feeling that there is much more going on in the world than what we currently observe. They do not attribute these experiences to some mythical figure – but it is about the experience of wonder, and about imagining answers to existential questions.

I hope this helps!

Thank you for writing this very interesting and important essay. I would love to hear your thoughts on how we should define what the scientific method is so as to make it resistant to misguided beliefs/attitudes/values.

Hi Jerry thanks for your comment! The scientific method is about clearly establishing a theoretical framework, following established methodologies, and presenting credible (e.g. peer-reviewed) evidence to back up an argument. I think these terms can often be confused in public discussions. In lay language a “theory” is seen as any idea. In science a theory is an established paradigm drawing on decades’ worth of scientific evidence. Therefore an uninformed opinion is not a scientific theory. A scientific theory is not about belief; it draws on academic studies.

Lay understandings about Western liberal democracy often draw on the idea that all opinions are free and equal to be expressed, even if they lack a factual basis or are hurtful to public discourse (or even abusive, such as targeting minorities). But when it comes to debating scientific findings, not all opinions are equal. Everyone is entitled to their beliefs, values and opinions, but these subjective positions cannot be used to dismiss science findings simply because we don’t like them. This is not science. Science does not require us to like it or to even believe in it; instead, we examine the evidence produced by experts, and additionally in sociology, we consider how knowledge is influenced by power relations (history, culture, gender, and so on).

Valid arguments about science are based on data. Invalid arguments (that is, those that are simply wrong) are based on personal belief. For example, “I don’t believe in climate change.” Okay – where’s your evidence? Conspiracy theories, random videos or website links and generic articles written by non-experts do not actually count as scientific evidence. The international science community has reached consensus on this; 97% of climate scientists have weighed up the evidence and they agree that climate change is caused by humans. The data are published in peer-reviewed journals, now collated by an international body, the IPCC. We don’t need to “believe” the idea of anthropogenic climate change; we just look at the evidence produced by experts.

The main problem is that some people refuse to see how their personal ideologies shape their understanding of science. If someone reads about a study and they don’t like the conclusions, but they can’t back this up with scientific evidence, that’s their personal opinion. But it’s not valid science.

I hope this helps!

I would like to “Like” Dr. Zevallos’s comments and those of others (I just created an account), but I’m unable to do so. I am, it appears, able to comment, though. (I will most definitely NOT “Like” conspiracy-theory-oriented comments.)

Hi Laurarunyan3. Thanks for your comment and support. My blog is moderated because I get a lot of abuse but I didn’t realise this also affected readers’ ability to “like” comments. Thanks for reading!

Thanks Zuleyka!

You said: “Everyone is entitled to their beliefs, values and opinions, but these subjective positions cannot be used to dismiss science findings simply because we don’t like them. This is not science. Science does not require us to like it or to even believe in it; instead, we examine the evidence produced by experts, and additionally in sociology, we consider how knowledge is influenced by power relations (history, culture, gender, and so on).”

Is there a way to make this idea clearer? That is, innovation is selected from creative ideas. I imagine creative ideas to be outliers. Outliers are considered non-mainstream and could incur the wrath of established doctrines, which would include attitudes that would label creative ideas as “crazy”.

So, how does one justify reasons for further pursuit? How does one make a scientific argument that my idea is innovative and not crazy? How can we know whether personal ideologies are NOT shaping our understanding of science? How do we assess good from bad science under uncertainty?
